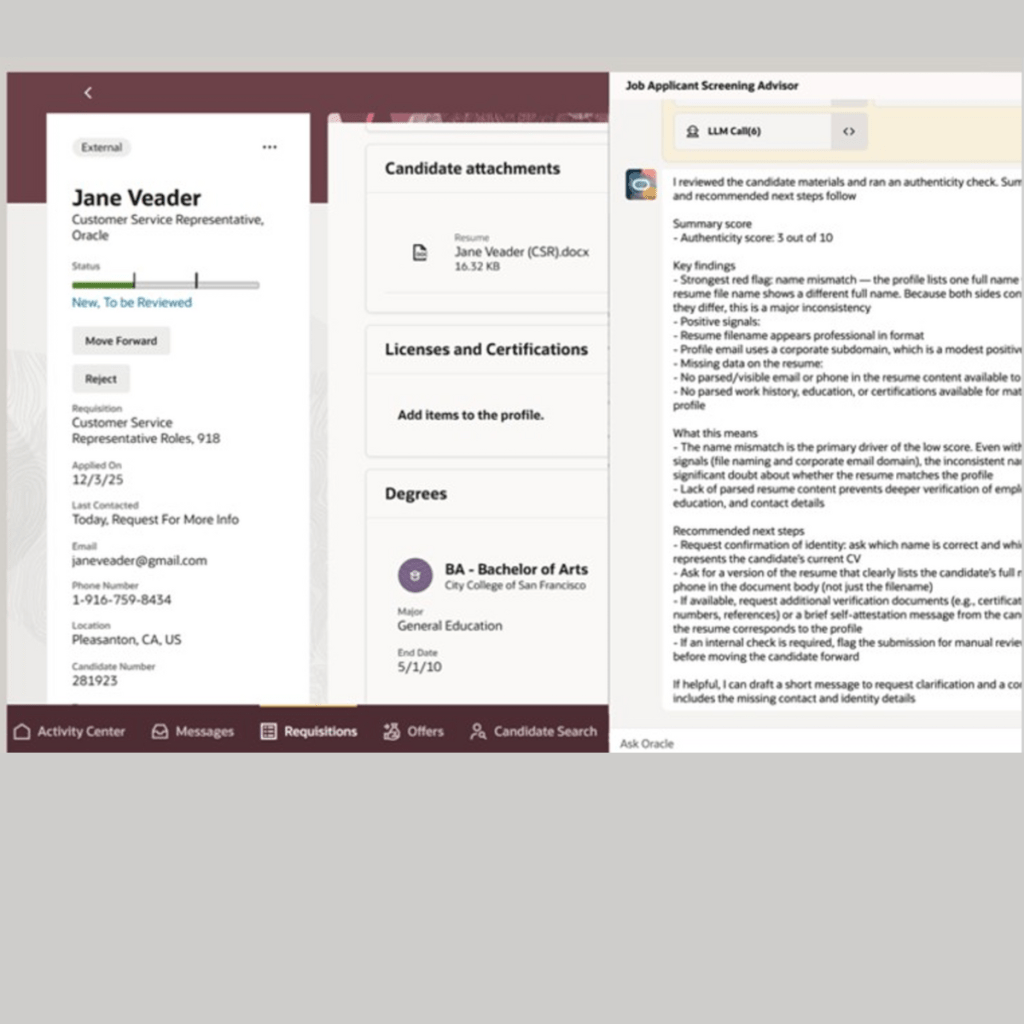

Hiring at scale brings a new challenge: how do you confidently identify genuine candidates in an age of AI-generated profiles?

With release 26A, Oracle enhances the Job Applicant Screening Advisor Agent with AI-powered candidate authenticity verification.

𝗪𝗵𝗮𝘁’𝘀 𝗲𝗻𝗵𝗮𝗻𝗰𝗲𝗱?

The agent now:

✔ Analyses candidate profiles and resumes using multiple signals, both provided and derived

✔ Detects potentially fraudulent or AI-generated submissions

✔ Applies a customisable authenticity rubric

✔ Delivers an authenticity score (0 to 10) with a clear explanation

✔ Recommends actionable next steps for recruiters

𝗕𝘂𝘀𝗶𝗻𝗲𝘀𝘀 𝗶𝗺𝗽𝗮𝗰𝘁

✔ Automatically flags high-risk or suspicious applications

✔ Reduces manual screening effort for recruiters

✔ Improves the quality of shortlists and hiring decisions

✔ Brings transparency to AI-driven screening

✔ Aligns with responsible AI & governance principles

This enhancement is a great example of responsible AI in action: it does not replace recruiter judgment but augments it with explainable, configurable intelligence.

It shows how AI agents can solve real hiring challenges when they are designed with trust, clarity, and accountability at their core.

Curious to see how organisations will tune these rules by role and geography.
